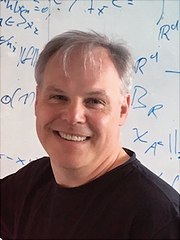

Prof. Dr. Armin Iske

Professor of Numerical Approximation

Address

Universität Hamburg

Fakultät für Mathematik, Informatik und Naturwissenschaften

Fachbereich Mathematik

AM – Angewandte Mathematik

Bundesstraße 55

20146 Hamburg

Office

Room: 136

Contact

Email: armin.iske"AT"uni-hamburg.de

Secretary's office

Further information is available on my English-language personal page.